What

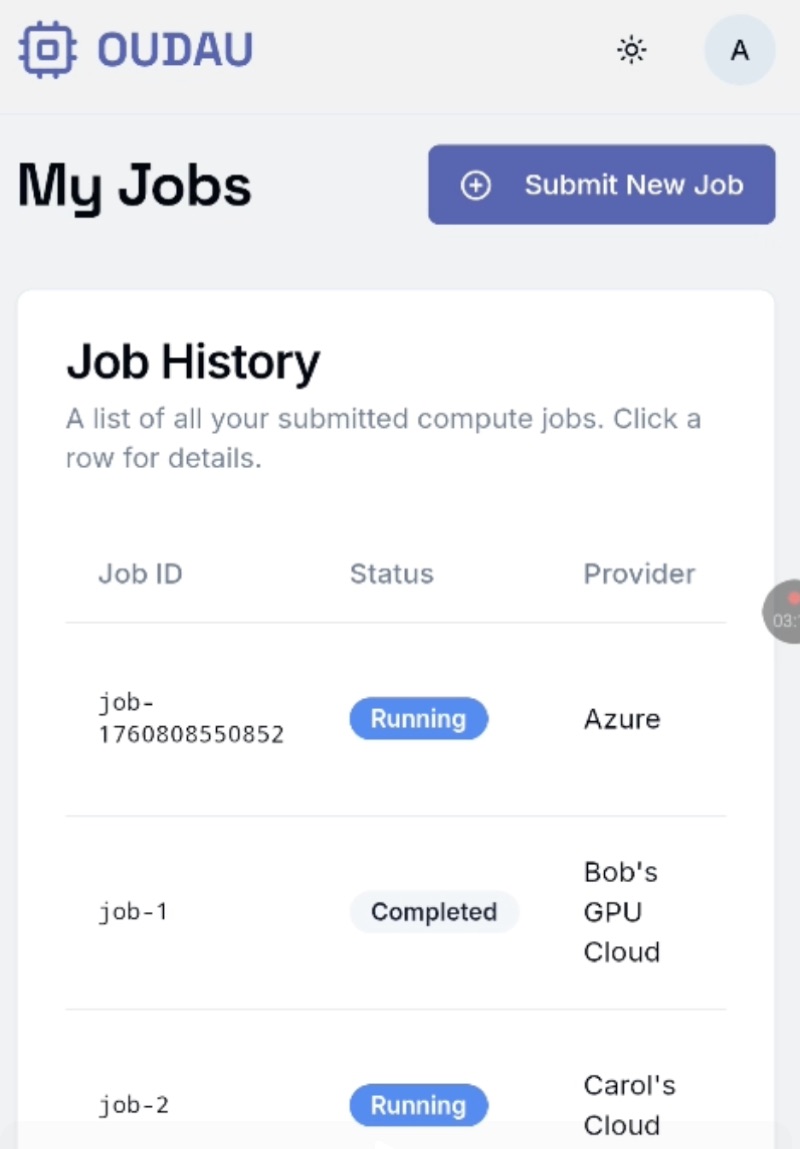

OUDAU was an attempt at building an allocation layer for AI compute. The idea: sit between GPU providers and the practitioners who need capacity, route jobs to the best available price, and send one invoice. In other words, a brokerage layer where allocators source, price, and book GPU capacity on behalf of clients. Brokerage was the first angle of attack, but every version of the idea was, at its core, an allocation layer.
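To make "route jobs to the best available price, and send one invoice" concrete, here is a minimal sketch of what that could look like. Everything in it is hypothetical: the `Offer` and `Job` types, the provider names, and the prices are made up for illustration. The routing rule (cheapest offer with the right GPU type and enough free GPUs, jobs handled in order) is a deliberately naive greedy policy, not OUDAU's actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """Hypothetical capacity offer from one GPU provider."""
    provider: str
    gpu: str
    usd_per_gpu_hour: float
    free_gpus: int


@dataclass(frozen=True)
class Job:
    """Hypothetical job request: GPU type, GPU count, and duration in hours."""
    name: str
    gpu: str
    gpus: int
    hours: float


def build_invoice(jobs: list[Job], offers: list[Offer]) -> tuple[list[tuple[str, str, float]], float]:
    """Greedily route each job to the cheapest matching offer, then total one invoice."""
    # Track free GPUs per offer so later jobs cannot reuse capacity already booked.
    free = [o.free_gpus for o in offers]
    lines = []
    for job in jobs:
        fits = [i for i, o in enumerate(offers) if o.gpu == job.gpu and free[i] >= job.gpus]
        if not fits:
            raise LookupError(f"no provider can fit job {job.name!r}")
        best = min(fits, key=lambda i: offers[i].usd_per_gpu_hour)
        free[best] -= job.gpus
        offer = offers[best]
        lines.append((job.name, offer.provider, job.gpus * job.hours * offer.usd_per_gpu_hour))
    # One invoice for the client, however many providers were used underneath.
    return lines, sum(cost for _, _, cost in lines)


# Made-up offers and jobs, for illustration only.
offers = [
    Offer("cloud-a", "H100", 2.50, 8),
    Offer("cloud-b", "H100", 2.10, 4),
    Offer("cloud-c", "A100", 1.20, 16),
]
jobs = [
    Job("finetune", "H100", 4, 10.0),
    Job("eval", "H100", 2, 3.0),
    Job("sweep", "A100", 8, 5.0),
]
lines, total = build_invoice(jobs, offers)
for name, provider, cost in lines:
    print(f"{name:<10}{provider:<10}${cost:,.2f}")
print(f"{'total':<20}${total:,.2f}")
```

With these numbers, `finetune` lands on `cloud-b` (the cheapest H100s), `eval` spills over to `cloud-a` because `cloud-b` is now full, and the client sees a single invoice of $147.00. Because the policy is greedy, job order changes the result; a real allocator would more likely treat this as an optimization problem over all pending jobs.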

The MVP was an orchestration tool for deploying and training models across multiple clouds at the best price; in some ways, a hosted dstack. The pitch was that ML teams should focus on building AI and doing research, not on infrastructure plumbing.

The project didn't come together. It stayed in research and writing mode and never reached the product traction needed to sustain it. What remains is the thinking behind it and two Substack pieces that I believe still hold up. I also plan to open-source a gpu-prices tool soon.

The Thesis

The framing came from Dan Shipper's idea of the allocation economy: if models can increasingly do knowledge work, the scarce skill shifts from doing the work to directing it. The advantage becomes how well you allocate resources, not what you know.

OUDAU was meant to be the layer that enables this: an exchange where allocators, professionals who route compute across venues, could operate. It treated compute allocation as a first-order problem: deciding what runs where, when, and under what constraints.

Commodity markets teach one lesson: the financial layer ends up bigger than the physical market. Compute is entering that same financialization wave, where liquidity, futures, and hedging matter as much as training AI models. OUDAU was meant to be the neutral allocation layer built for this wave.

Compute is often compared to oil, but it's not a storable stock. It's temporal: priced in GPU-hours and consumed over time. Capacity left unused in a given hour is gone for good. It looks more like electricity than oil.
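A toy comparison makes the difference concrete. The sketch below feeds the same supply and the same bursty demand into two hypothetical models: one where each hour's GPU capacity must be used that hour or expire, and one where unused units carry over like barrels in storage. The function names and numbers are made up for illustration, and the model assumes fixed capacity per hour and demand that cannot be shifted to a later hour.

```python
def perishable(capacity_per_hour: int, demand: list[int]) -> tuple[int, int]:
    """GPU-hours: each hour's capacity must be used that hour or it expires.

    Returns (served, expired) GPU-hours.
    """
    served = expired = 0
    for wanted in demand:
        used = min(capacity_per_hour, wanted)
        served += used
        expired += capacity_per_hour - used  # idle capacity is lost for good
    return served, expired


def storable(supply_per_period: int, demand: list[int]) -> tuple[int, int]:
    """Oil-like stock: unused supply carries over to later periods.

    Returns (served, left_in_storage).
    """
    served = stock = 0
    for wanted in demand:
        stock += supply_per_period
        used = min(stock, wanted)
        served += used
        stock -= used  # leftovers stay in the tank for the next period
    return served, stock


# Same total supply (4 periods x 6 = 24) and same total demand (24) in both cases.
demand = [2, 10, 2, 10]
print(perishable(6, demand))  # (16, 8)
print(storable(6, demand))    # (24, 0)
```

Both cases see 24 units of supply against 24 units of demand, yet the perishable version serves only 16 GPU-hours and lets 8 expire during the quiet hours, while the storable version serves everything. That gap is why timing sits at the center of compute allocation.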