neural-geometry

Building intuition for neural networks through simple models, visualization, and graphics programming. Exploring ReLU geometry, piecewise-linear regions, and Bayesian uncertainty, built from scratch with NumPy and OpenGL.

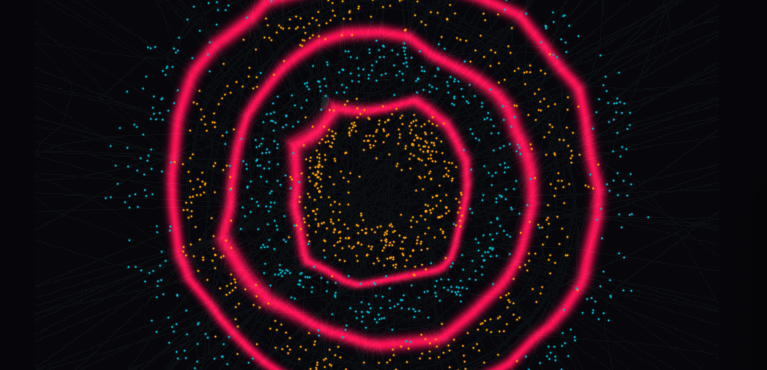

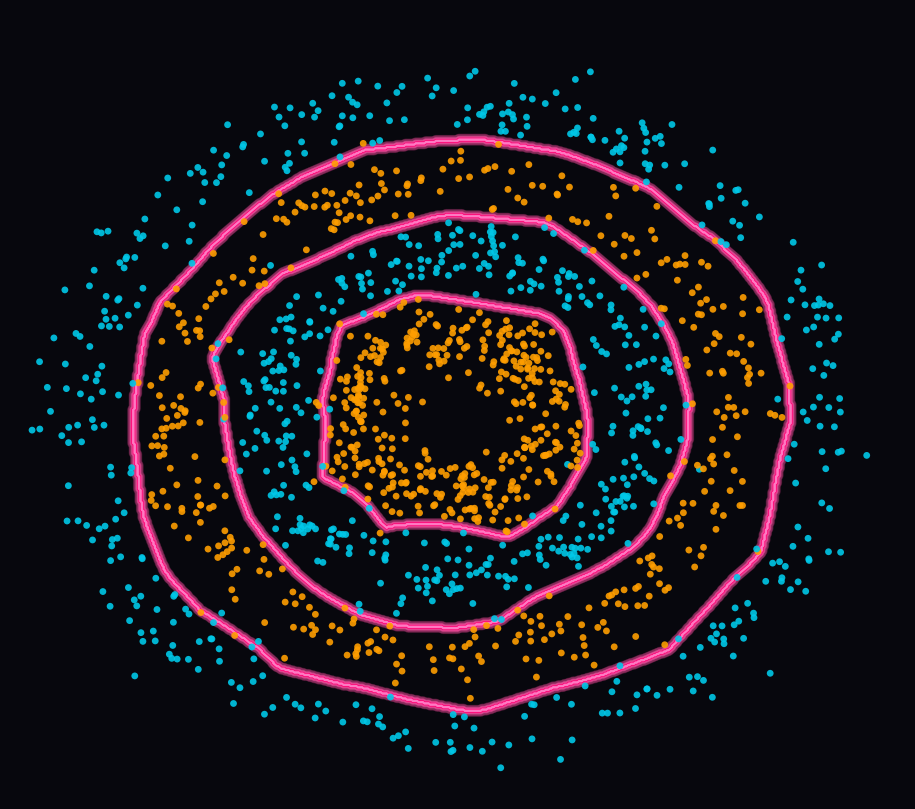

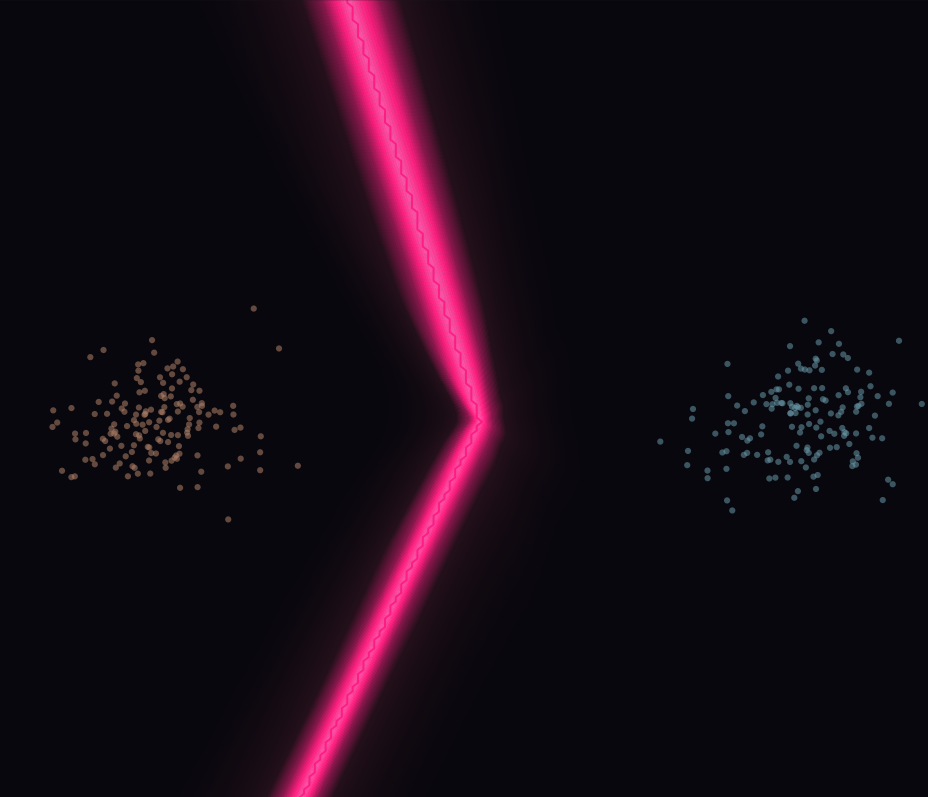

Neural Geometry is a personal project for building intuition around two things: neural networks and graphics programming. The visualizations look at what happens inside trained networks: how they carve up space, where they are confident, and where they are not. The first direction is ReLU geometry: a network trained on a ring-shaped dataset, where you can see the flat linear regions the network creates and watch the decision boundary emerge from them. The second is Bayesian uncertainty: the same kind of network, but with a Laplace approximation on the last layer, so that it stays confident near the training data and becomes uncertain far from it. The graphics side is not incidental; rendering these structures properly is part of what makes the project worth building.

Each of the current directions has some motivating work behind it:

ReLU

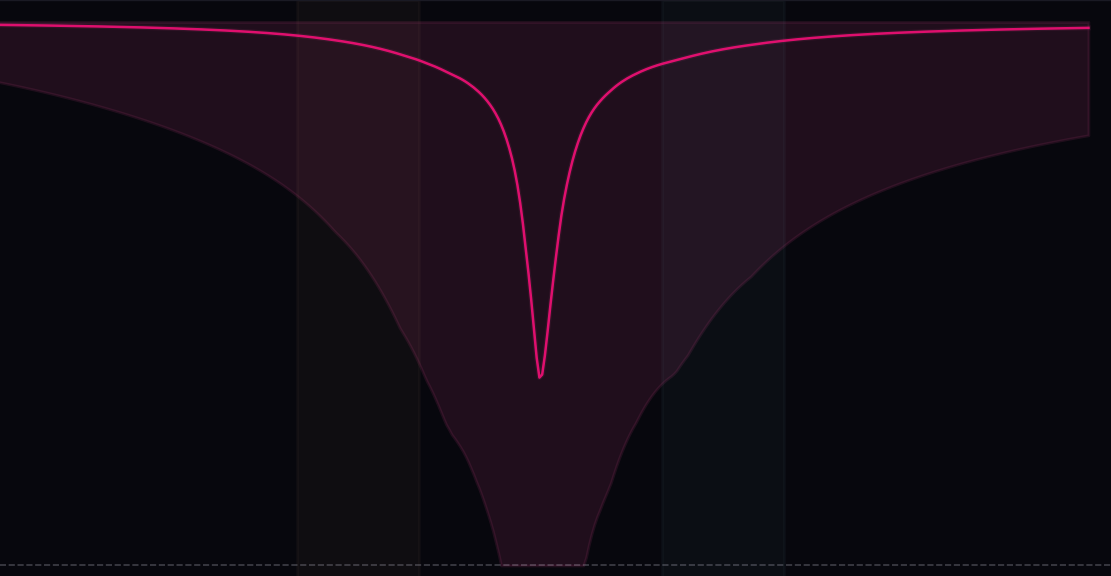

ReLU is an activation function, probably the most widely used one. Think of it as a gate: it decides which neurons fire and which stay silent. The on/off pattern across all neurons defines a region of input space where the network behaves like a single affine function. Two inputs that produce the same pattern of gates lie in the same region. The network carves all of input space into these flat linear pieces, and the decision boundary is straight inside each piece and can only bend at the edges where regions meet. That structure is what the visualization lets you see and explore directly.
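To make the gate picture concrete, here is a minimal NumPy sketch. The network is a hypothetical one with random weights (2 inputs, 8 hidden ReLU units, 2 logits), not the project's trained model, and the names `forward` and `local_affine` are made up for illustration. It reads off the activation pattern at a point and checks that the network agrees with the affine map implied by that pattern.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical tiny network with random weights: 2 inputs -> 8 ReLU units -> 2 logits.
W1, b1 = rng.normal(size=(8, 2)), rng.normal(size=8)
W2, b2 = rng.normal(size=(2, 8)), rng.normal(size=2)


def forward(x):
    """Return the logits and the on/off activation pattern for input x."""
    pre = W1 @ x + b1
    pattern = pre > 0                      # which gates are open
    return W2 @ (pre * pattern) + b2, pattern


def local_affine(pattern):
    """Affine map (A, c) that the network equals on the region with this pattern."""
    D = np.diag(pattern.astype(float))     # closed gates zero out their rows
    return W2 @ D @ W1, W2 @ D @ b1 + b2


x = np.array([0.3, -0.7])
logits, pattern = forward(x)
A, c = local_affine(pattern)

# Inside the region, the network output is exactly A @ x + c.
assert np.allclose(logits, A @ x + c)
```

Any other input with the same `pattern` is mapped by the same `A` and `c`, which is what "same pattern, same region" means in practice.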

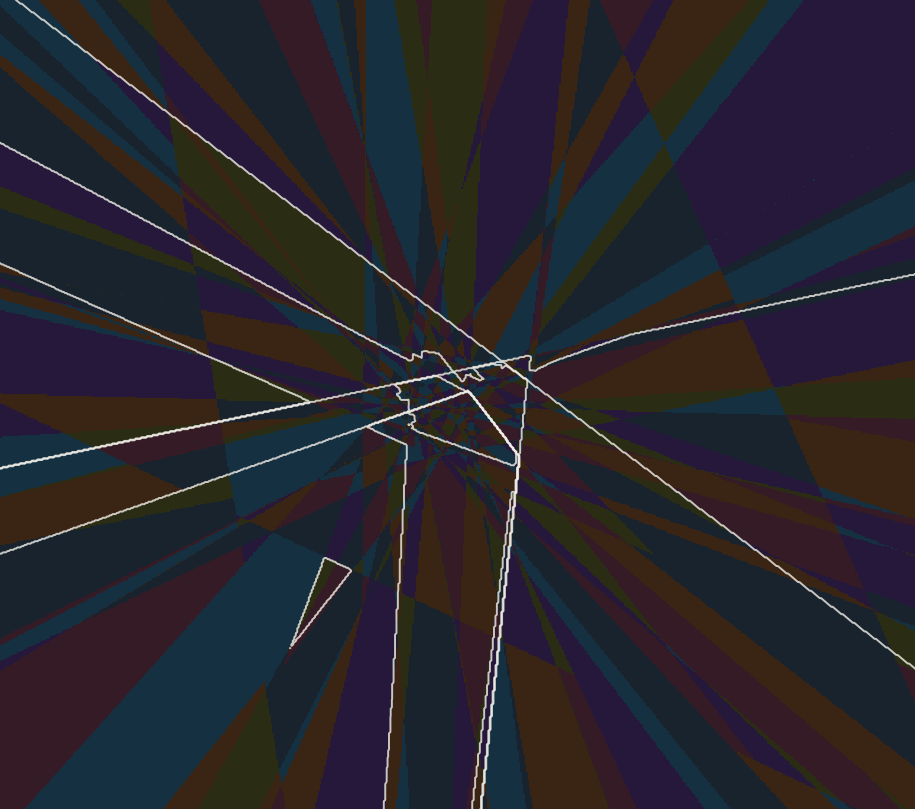

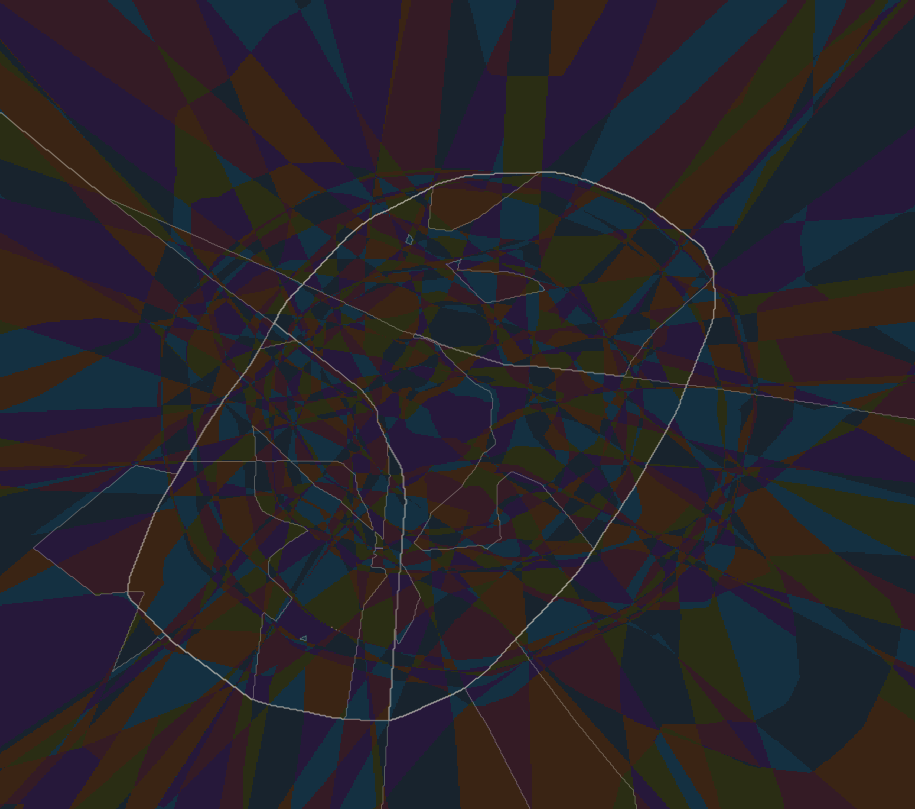

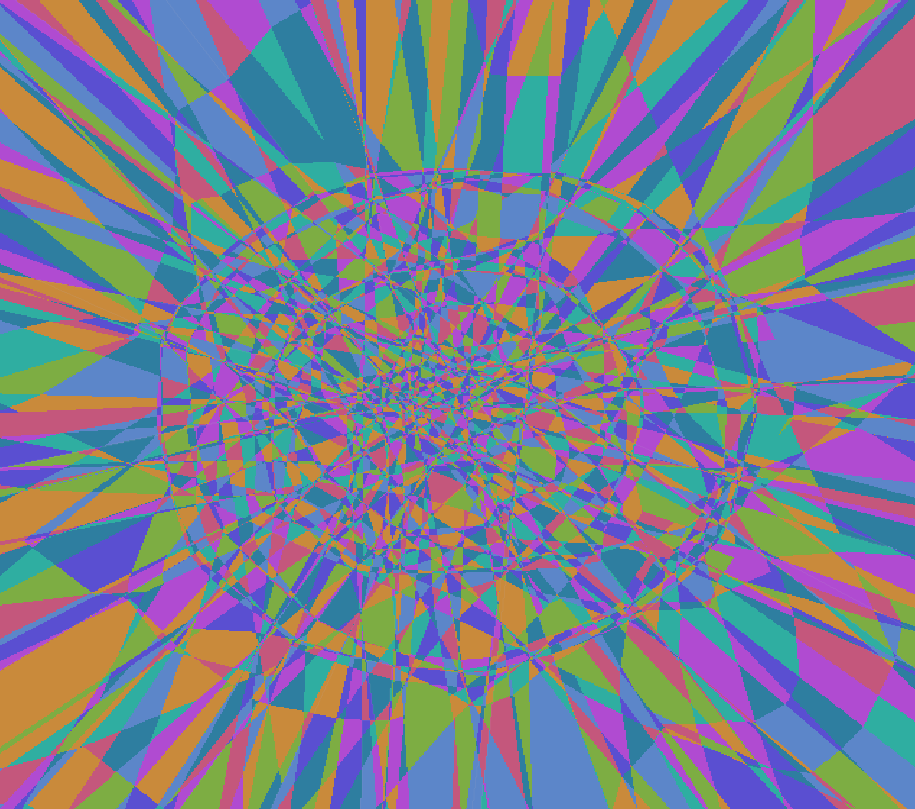

Successive ReLU layers partition the plane into increasingly fine linear regions. The first layer creates coarse radial cuts, the second refines them, and the joint partition is their composition. The decision boundary emerges from that structure.
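One rough way to see the refinement numerically is to count the distinct activation patterns on a grid. This sketch assumes a hypothetical two-hidden-layer network with random weights and a grid over [-3, 3]², so the counts say nothing about the trained model; they only illustrate that the joint pattern is at least as fine as either layer's pattern alone.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical network with random weights: 2 inputs -> 6 ReLU -> 6 ReLU.
W1, b1 = rng.normal(size=(6, 2)), rng.normal(size=6)
W2, b2 = rng.normal(size=(6, 6)), rng.normal(size=6)

# Dense grid of points covering a square patch of the plane.
xs = np.linspace(-3.0, 3.0, 200)
X = np.stack(np.meshgrid(xs, xs), axis=-1).reshape(-1, 2)

pre1 = X @ W1.T + b1
p1 = pre1 > 0                                   # layer-1 gates per grid point
p2 = (np.maximum(pre1, 0.0) @ W2.T + b2) > 0    # layer-2 gates per grid point
joint = np.concatenate([p1, p2], axis=1)        # both layers together


def count_patterns(p):
    """Number of distinct activation patterns seen on the grid."""
    return len(np.unique(p.astype(np.uint8), axis=0))


n1, n2, n_joint = count_patterns(p1), count_patterns(p2), count_patterns(joint)
print(f"layer 1: {n1}, layer 2: {n2}, joint: {n_joint}")
```

The grid only finds regions that contain at least one sample, so very thin regions can be missed; a finer grid gives a better count.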

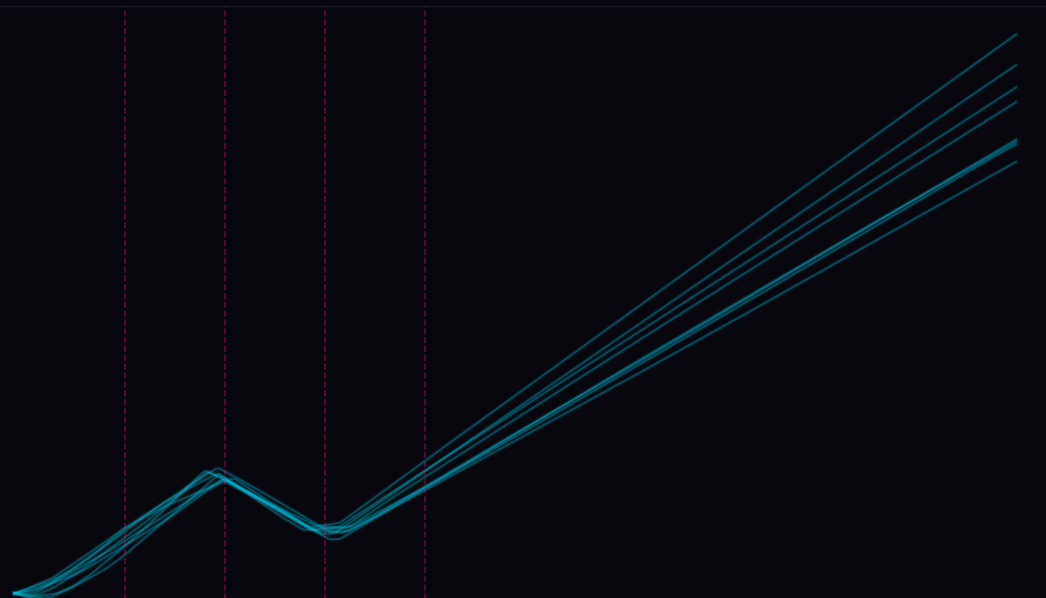

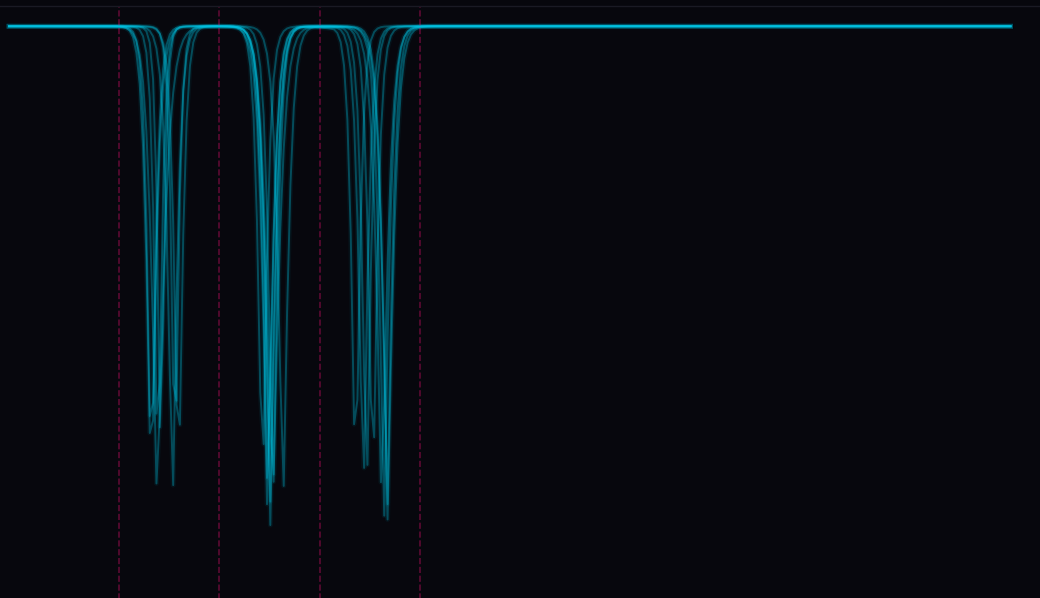

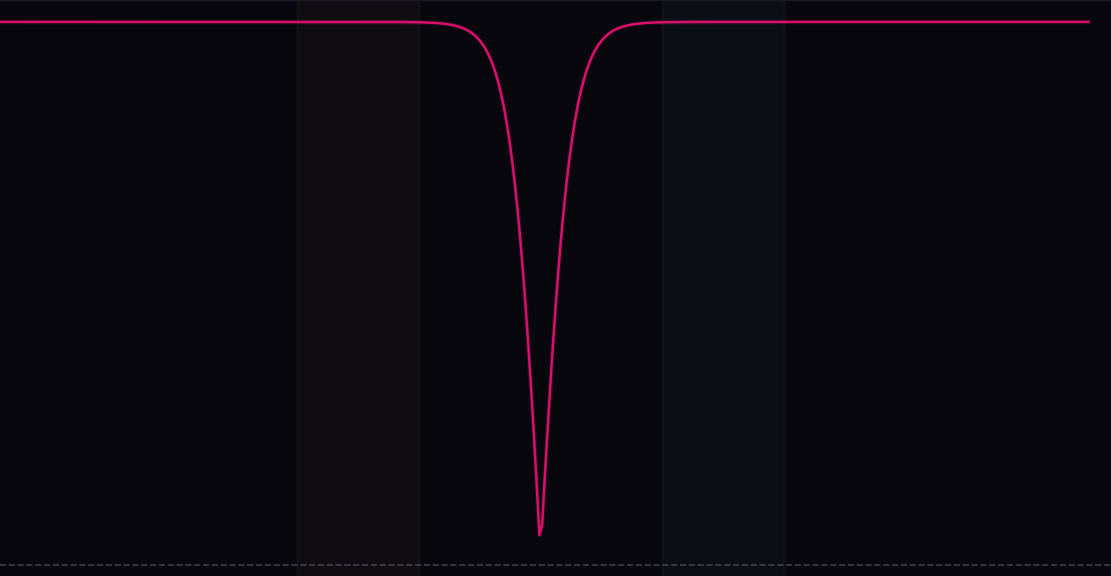

Along a fixed ray, the network moves through a sequence of activation regions. Inside each region the network is just an affine map, so the logit difference changes linearly with radius. The visible kinks mark where the ray crosses into a new region. Confidence dips near those crossings, but stays high almost everywhere else, importantly including far from the data.
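The ray picture can be reproduced in a few lines. The sketch below again assumes a hypothetical one-hidden-layer network with random weights and an arbitrary ray direction. It samples the logit difference along the ray, marks the radii where the activation pattern changes, and checks that the second differences vanish wherever three consecutive samples share a pattern, which is what "linear inside each region" means.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical network with random weights: 2 inputs -> 8 ReLU units -> 2 logits.
W1, b1 = rng.normal(size=(8, 2)), rng.normal(size=8)
W2, b2 = rng.normal(size=(2, 8)), rng.normal(size=2)

u = np.array([np.cos(0.4), np.sin(0.4)])   # arbitrary unit direction of the ray
r = np.linspace(0.0, 10.0, 2001)           # radii sampled along the ray
X = r[:, None] * u

pre = X @ W1.T + b1
pattern = pre > 0
logits = np.maximum(pre, 0.0) @ W2.T + b2
diff = logits[:, 1] - logits[:, 0]         # logit difference along the ray

# changed[i] is True when the pattern differs between samples i and i + 1.
changed = np.any(pattern[1:] != pattern[:-1], axis=1)
kink_radii = r[1:][changed]

# Second differences are zero wherever three consecutive samples share a pattern.
second = diff[2:] - 2.0 * diff[1:-1] + diff[:-2]
same_region = ~changed[:-1] & ~changed[1:]
assert np.allclose(second[same_region], 0.0, atol=1e-9)

# Softmax confidence of a two-class model as a function of radius.
confidence = 1.0 / (1.0 + np.exp(-np.abs(diff)))
print("kinks near r =", np.round(kink_radii, 2))
```

With a single hidden layer, each unit can switch at most once along a ray, so there are at most eight kinks here. Past the last kink the logit difference is a single linear function of the radius.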

Bayesian

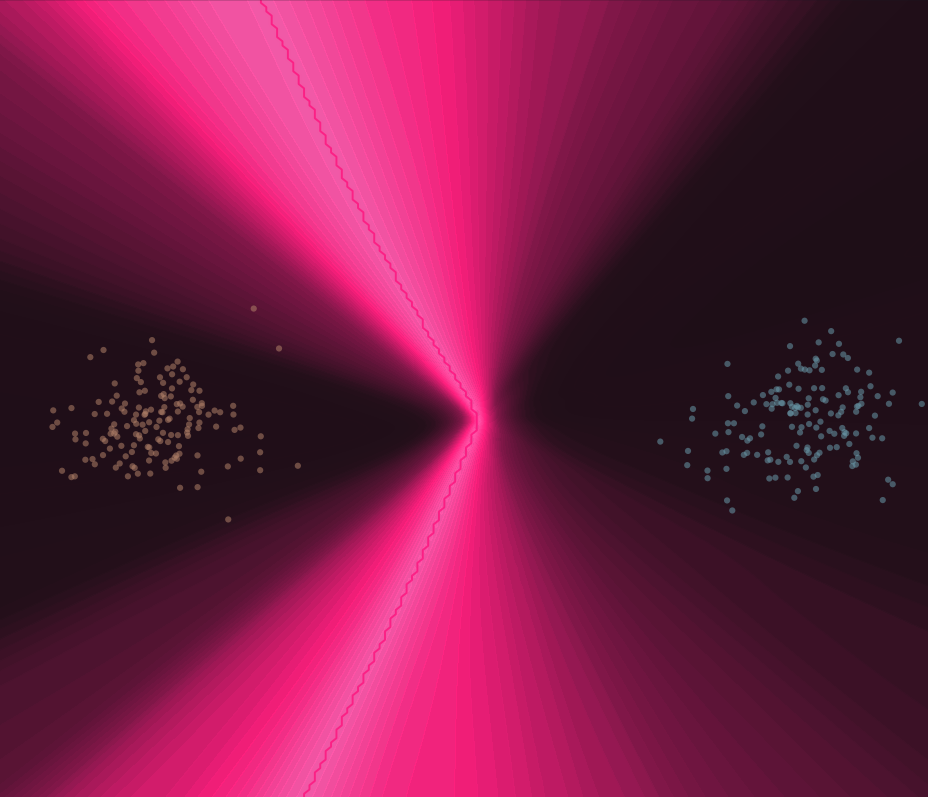

Quantifying uncertainty is interesting in its own right, and it is what makes Bayesian statistics and Bayesian neural networks compelling: you can get better-calibrated models. Making a ReLU network uncertain where it should be uncertain is what the Being Bayesian paper is about. Because ReLU networks are piecewise linear, the outermost regions extend to infinity. An out-of-distribution data point falls into one of these regions, where the logits grow linearly with distance from the data, so you end up with very large logits. That reads as high confidence, even though the model should probably be uncertain about such exotic data.

Looking into how to mitigate this overconfidence problem in ReLU networks is what led me to this paper and deeper into the topic. When you look into Bayesian neural networks and Bayesian methods more generally, you also often come across very nice visualizations and intuition-building. I think it fits this project well, and it is something I want to explore more.

MAP quickly returns to near-1 confidence away from the data. The last-layer Laplace approximation relaxes toward 0.5 and shows wider predictive spread in regions with little or no training data.
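The MAP versus last-layer Laplace comparison can be sketched on a toy problem. Everything here is an assumption made for illustration: the two-blob dataset, the fixed random ReLU features standing in for a trained hidden layer, the prior precision of 1.0, the Newton fit, and the probit approximation used for the predictive. It is not the project's training code.

```python
import numpy as np

rng = np.random.default_rng(3)

# Toy data (assumption): two Gaussian blobs, not the project's ring dataset.
X = np.vstack([rng.normal([-1.0, 0.0], 0.3, size=(50, 2)),
               rng.normal([+1.0, 0.0], 0.3, size=(50, 2))])
y = np.repeat([0.0, 1.0], 50)

# Fixed random ReLU features stand in for a trained hidden layer (assumption).
W1, b1 = rng.normal(size=(16, 2)), rng.normal(size=16)


def features(X):
    return np.maximum(X @ W1.T + b1, 0.0)


def sigmoid(z):
    return 0.5 * (1.0 + np.tanh(0.5 * z))   # numerically stable form


def hessian(Phi, w, prior_precision):
    p = sigmoid(Phi @ w)
    return Phi.T @ (Phi * (p * (1.0 - p))[:, None]) + prior_precision * np.eye(len(w))


Phi = features(X)
prior_precision = 1.0                        # assumed Gaussian prior on last-layer weights

# MAP estimate of the last layer via a few Newton steps.
w = np.zeros(Phi.shape[1])
for _ in range(25):
    grad = Phi.T @ (sigmoid(Phi @ w) - y) + prior_precision * w
    w -= np.linalg.solve(hessian(Phi, w, prior_precision), grad)

# Laplace approximation: Gaussian posterior with covariance H^{-1} at the MAP.
Sigma = np.linalg.inv(hessian(Phi, w, prior_precision))


def confidences(X_test):
    """Return (MAP confidence, Laplace confidence) for each test point."""
    phi = features(X_test)
    f = phi @ w                                           # mean logit
    var = np.einsum("ni,ij,nj->n", phi, Sigma, phi)       # logit variance
    p_map = sigmoid(f)
    p_lap = sigmoid(f / np.sqrt(1.0 + np.pi / 8.0 * var))  # probit approximation
    return np.maximum(p_map, 1 - p_map), np.maximum(p_lap, 1 - p_lap)


radii = np.array([1.0, 10.0, 100.0])
conf_map, conf_lap = confidences(radii[:, None] * np.array([1.0, 0.0]))
print("MAP:    ", np.round(conf_map, 3))
print("Laplace:", np.round(conf_lap, 3))
```

Far from the data the mean logit grows linearly with the radius while the logit variance grows quadratically, so the ratio inside the sigmoid levels off. That is why the Laplace confidence is pulled back toward 0.5 and stays below the MAP confidence instead of saturating.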

Graphics

Graphics has always been something I find genuinely interesting: reading about different graphics APIs, techniques, the math behind them, and visualization, scientific visualization in particular. People who work in graphics tend to be very good programmers; you see that with game developers, scientific visualization people, and those doing general-purpose GPU programming.

I have dabbled in OpenGL here and there over the past year, and using it to build the visualizations here felt like a natural fit. WebGPU also interests me; maybe that is something for the future. We will see.

What I find interesting about graphics APIs specifically is that they make you think about memory in a concrete way. You have your data on the GPU, not the CPU, and the API is how you put it there and manipulate it. Once you understand vertex buffers, vertex array objects, and buffer objects, it clicks. It is the same idea across OpenGL and all the other graphics APIs: your data lives on an accelerator, and you control it explicitly.
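The memory view starts on the CPU side, before any GL call. The sketch below assumes an interleaved position-plus-color vertex layout (a common choice, not necessarily the one this project uses) and shows the stride and byte offsets that a vertex array object would record. The OpenGL calls are mentioned only in comments because they need a live GL context.

```python
import numpy as np

# Assumed interleaved layout: 3 float32 for position, then 3 float32 for color.
vertex_dtype = np.dtype([("position", np.float32, 3),
                         ("color", np.float32, 3)])

# One triangle, three vertices.
vertices = np.zeros(3, dtype=vertex_dtype)
vertices["position"] = [[-0.5, -0.5, 0.0], [0.5, -0.5, 0.0], [0.0, 0.5, 0.0]]
vertices["color"] = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

stride = vertex_dtype.itemsize                         # bytes from one vertex to the next
position_offset = vertex_dtype.fields["position"][1]   # byte offset of position in a vertex
color_offset = vertex_dtype.fields["color"][1]         # byte offset of color in a vertex
raw = vertices.tobytes()                               # the bytes a buffer upload would copy

# With a GL context, `raw` is what glBufferData would copy into a vertex buffer,
# and (stride, offset) are what glVertexAttribPointer would be given per attribute.
print(stride, position_offset, color_offset, len(raw))  # 24 0 12 72
```

The vertex array object then only remembers this description of the buffer: which attribute starts at which offset and how far apart consecutive vertices are.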

Live OpenGL viewer training a ReLU network. The joint activation regions reorganize as training progresses, and the decision boundary gradually locks into place.

Resources

ICML 2020: Being Bayesian, Even Just a Bit, Fixes Overconfidence in ReLU Networks

UAI 2021: Learnable Uncertainty under Laplace Approximations

Reading Group #11 - Being Bayesian, Even Just a Bit, Fixes Overconfidence in ReLU Networks

Gradient descent, how neural networks learn | Deep Learning Chapter 2

Neural networks: training with backpropagation.

Writing an LLM from scratch, part 20 -- starting training, and cross entropy loss

Cross-Entropy Loss Function Tutorial

100 numpy exercises

Practical Linear Algebra with NumPy

The Strange Practices at The Broadcaster's Inn - Broadcasting in NumPy

I don't like NumPy

Siu Kwan Lam - Numba v2: Towards a SuperOptimizing Python Compiler | SciPy 2025

A Detailed Guide to Numba

Jendrik Illner

Victor Gordan

LegNeato

The Graphics Guy

Elie Michel

Maxime

nrougier

XoR

Jensen OpenGL

TPU

GPU glossary by Modal

WebGPU resources

The Book of Shaders

Vector Graphics on GPU

UofM Introduction to Computer Graphics - COMP 3490

Opinion on GPU APIs